Did you click a link from the newsletter expecting a post on ESB Toolkit 2.2? Head right over here. Otherwise, keep reading!

This post is the sixteenth in a weekly series intended to briefly spotlight those things that you need to know about new features in BizTalk Server 2013. It is also the fourth part of a five-part series on REST support in BizTalk Server 2013.

If you haven’t been following the REST series, stop reading right now, and then read from the beginning of the series. I’m not re-covering any already covered ground in this post.

In this post we will discuss dealing with incoming JSON requests, such that they become readable/map-able XML for which we can generate a schema. Ultimately there are two ways that this can be accomplished. Both of these methods have actually already been extensively documented. The first method is by using a custom pipeline component (demonstrated here by Nick Heppleston), the second is by extending WCF with a custom behavior (briefly discussed here by Saravana Kumar).

Both of the articles linked in the above paragraph really only deal with the receive side of the story. In this article I will set out to demonstrate both receiving JSON data, and responding with JSON data. In the process I will demonstrate two methods of dealing with cross-domain requests (CORS and JSONP), and build pipeline components and pipelines to handle both scenarios.

Getting Started with a Custom Pipeline Component

In order to get started with custom pipeline component development, I will be reaching again to the BizTalk Server Pipeline Component Wizard (link points to the patch for BizTalk Server 2013, which requires InstallShield LE and an utterly maddening registration/download process). I am going to use the wizard to create two projects – one for a JsonDecoder pipeline component and one for a JsonEncoder pipeline component.

Each of these projects will be taking a dependency on Newtonsoft’s excellent Json.NET library for the actual JSON serialization/deserialization. This will ultimately be done through the official NuGet package for Json.NET.

Since we can have JSON data that starts as just a raw list of items, we likely want to wrap a named root item around that for the purpose of XML conversion. In order to choose the name for that root node, we will rely on the name of the operation that is being processed. However, in the case of a single operation with a lame name, we can expose a pipeline component property to allow overriding that behavior.

Another pipeline component property that would be nice to expose is a target namespace for the XML that will be generated.

Creating XML from JSON Data

Creating XML from JSON formatted data is actually supported out of the box with the JSON.NET library. We can do so with the following code:

[sourcecode language="csharp"]
var jsonString = jsonStream.Length == 0
    ? string.Empty
    : Encoding.GetEncoding(inmsg.BodyPart.Charset ?? Encoding.UTF8.WebName)
        .GetString(jsonStream.ToArray());

// Name the root node of the generated XML doc after the operation, unless
// a specific name has been specified via the RootNode property.
var rawDoc = JsonConvert.DeserializeXmlNode(jsonString,
    string.IsNullOrWhiteSpace(this.RootNode)
        ? operationName
        : this.RootNode, true);

// Here we are ensuring that the custom namespace shows up on the root node
// so that we have a nice clean message type on the request messages
var xmlDoc = new XmlDocument();
xmlDoc.AppendChild(
    xmlDoc.CreateElement(DEFAULT_PREFIX, rawDoc.DocumentElement.LocalName, this.Namespace));
xmlDoc.DocumentElement.InnerXml = rawDoc.DocumentElement.InnerXml;
[/sourcecode]

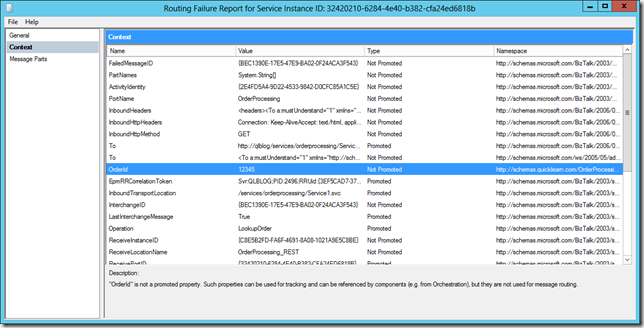

In the above snippet, you are seeing the operationName variable out of context. That variable will contain the value of the current operation requested (which is defined by you within the adapter properties for the WCF-WebHttp adapter, and then matched at runtime to the HTTP Method and URL requested).

Another weird thing that we are doing here is making sure that we have full control over the root node, by allowing it to be generated by the JSON.NET library, and then replacing it with our own namespace-qualified root node.
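To make the root-node idea a bit more concrete outside of .NET, here is a minimal JavaScript sketch of the same concept. It is not Json.NET and does not try to match its output exactly; the helper names (`escapeXml`, `toXml`, `jsonToXml`), the `ns0` prefix, and the namespace URI are all made up for illustration. It also assumes the JSON body is an object at the top level and that the JSON keys are valid XML element names.

```javascript
// Hypothetical helpers for illustration only; this is not Json.NET.

// Escape the characters that would break element content.
function escapeXml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Convert a parsed JSON value into XML for an element called `name`.
// Arrays become repeated elements that share the same name.
function toXml(name, value) {
  if (Array.isArray(value)) {
    return value.map((item) => toXml(name, item)).join("");
  }
  if (value !== null && typeof value === "object") {
    const children = Object.keys(value)
      .map((key) => toXml(key, value[key]))
      .join("");
    return "<" + name + ">" + children + "</" + name + ">";
  }
  const text = value === null ? "" : escapeXml(value);
  return "<" + name + ">" + text + "</" + name + ">";
}

// Wrap the converted body in a namespace-qualified root element.
// Assumes the JSON body is an object (not a bare array or scalar).
function jsonToXml(jsonString, rootName, prefix, namespace) {
  const parsed = JSON.parse(jsonString);
  const inner = Object.keys(parsed)
    .map((key) => toXml(key, parsed[key]))
    .join("");
  const qualified = prefix + ":" + rootName;
  return (
    "<" + qualified + " xmlns:" + prefix + '="' + namespace + '">' +
    inner +
    "</" + qualified + ">"
  );
}

const xml = jsonToXml(
  '{"item":[{"id":1,"amount":10},{"id":2,"amount":20}]}',
  "ItemAverageRequest",       // root name, e.g. taken from the operation name
  "ns0",                      // assumed prefix
  "http://example.org/json"   // assumed target namespace
);
console.log(xml);
```

The point of the sketch is only that the root element name and namespace are chosen by us rather than derived from the JSON itself, which is what gives BizTalk a predictable message type to work with.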

Creating JSON from XML Data

Taking XML data and generating JSON formatted data is nearly as easy:

[sourcecode language="csharp"]
XmlDocument xmlDoc = new XmlDocument();
xmlDoc.Load(inmsg.BodyPart.Data);

if (xmlDoc.FirstChild.LocalName == "xml")
    xmlDoc.RemoveChild(xmlDoc.FirstChild);

// Remove any root-level attributes added in the process of creating the XML
// (Think xmlns attributes that have no meaning in JSON)
xmlDoc.DocumentElement.Attributes.RemoveAll();

string jsonString = JsonConvert.SerializeXmlNode(xmlDoc, Newtonsoft.Json.Formatting.Indented, true);
[/sourcecode]

There are a few lines of code there dedicated to dealing with some quirks in the process. Namely, if you do not remove both the potentially prepended “xml” declaration node and the namespace attributes on the root node, these will show up in the JSON output – yikes!

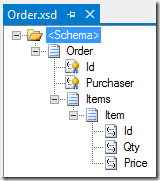

Creating Schemas for JSON Requests and Responses

Assuming that the data will be in XML form whenever BizTalk gets a hold of it, how can we generate a representative schema without much pain? Our best bet will be simply taking some JSON, throwing it through the same process above, and then generating a schema from the resulting XML.

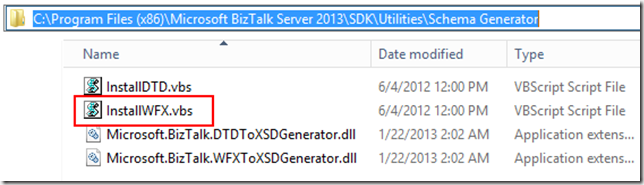

If you haven’t already, you will need to install the Well Formed XML schema generator by heading over to the C:\Program Files (x86)\Microsoft BizTalk Server 2013\SDK\Utilities\Schema Generator folder and then running the InstallWFX.vbs script that you will find there:

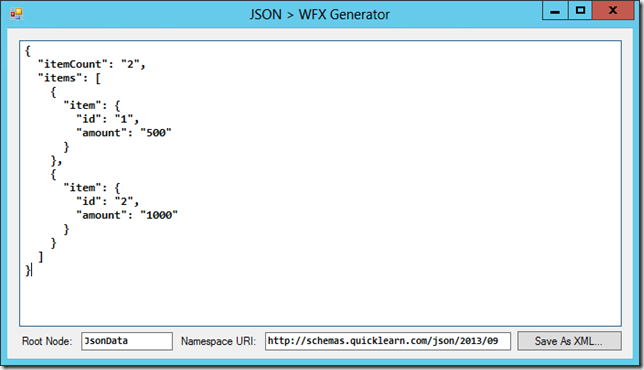

In order to make it easier to run some JSON formatted data through this process, I created a quick no-frills sample app that can do the conversion and save the result as an XML file:

Once we have the output of that application, we can run it through the schema wizard, and end up with a schema that looks somewhat like the following (for which much polishing is required with regards to the selection of data types):

Dealing with Cross-Site Requests

Modern browsers will be quite leery of allowing for cross-domain requests (i.e., requests originating from a site on one domain which target a service on a different domain). That is not to say, however, that every instance of this is inherently bad.

One thing I wanted to make sure of was that I could publish a service that would accept requests originating from a different domain. There are two common approaches for allowing this to happen.

One of them is handled at the HTTP level through specialized headers that are passed through a series of requests to ensure that a service is willing to be called from a different domain. This method is known as CORS or Cross-Origin Resource Sharing.

The other method is much more hacky and really ought to be reserved for those cases where the browser can’t cope with CORS. This other method is JSONP (JSON with Padding).

Examining CORS

Let’s start this whole discussion by taking a look at CORS first. Assume that we have the following code:

[sourcecode language="javascript"]
var request = $.ajax({
    url: $("#serverUrl").val(),
    type: 'POST',
    contentType: 'application/json',
    data: JSON.stringify(data),
    processData: false,
    dataType: 'json'
});
[/sourcecode]

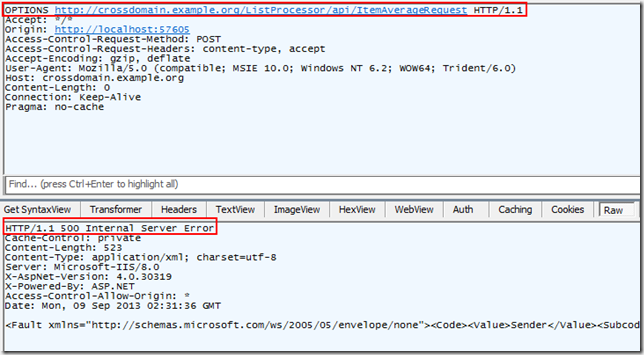

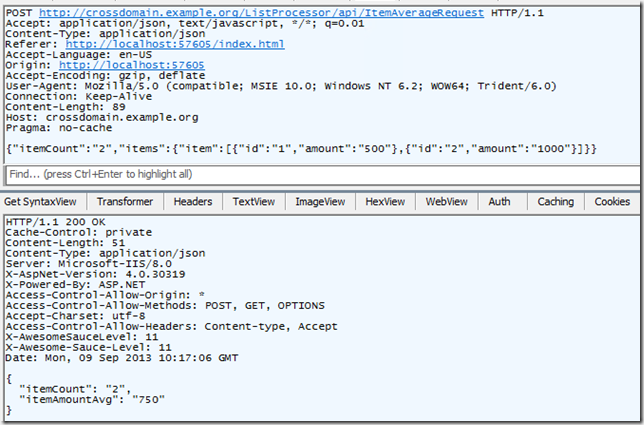

Now assume that the item on the page with the id serverUrl contains a URL for a completely external site. If that is the case, and you’re using a modern browser that supports CORS, you will see something like this if you watch your HTTP traffic:

Before the actual request is sent (with the data posted in the body), a simple OPTIONS request is issued to determine what is possible with the resource that is about to be requested. Additionally it includes Access-Control-Request headers that indicate which HTTP Method is desired, and which headers are desired (to be sent). Here the server must respond in kind saying that it allows requests from the origin domain (notice the origin header above calling out the site originating the request), and saying that it supports the Method/Headers that the origin site is interested in.

If it does not respond in the correct manner, the conversation is over, and errors explode all over the place.
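As a rough illustration of what "responding in kind" means, here is a small JavaScript sketch that models the decision from the service's point of view. The `policy` object and the `answerPreflight` function are hypothetical names, the allowed values are placeholders rather than the headers I actually ended up with, and request header names are assumed to be lower-cased already. In real life the browser does the final checking against whatever headers come back.

```javascript
// Hypothetical policy; these values are placeholders, not a recommendation.
const policy = {
  allowedOrigins: ["*"],
  allowedMethods: ["POST", "GET", "OPTIONS"],
  allowedHeaders: ["content-type", "accept"],
};

// Given the (lower-cased) headers of an OPTIONS preflight request, return the
// response headers to send back, or null if the request should be refused.
function answerPreflight(requestHeaders, policy) {
  const origin = requestHeaders["origin"];
  const method = requestHeaders["access-control-request-method"];
  const wanted = (requestHeaders["access-control-request-headers"] || "")
    .split(",")
    .map((h) => h.trim().toLowerCase())
    .filter((h) => h.length > 0);

  const anyOrigin = policy.allowedOrigins.includes("*");
  const originOk = Boolean(origin) && (anyOrigin || policy.allowedOrigins.includes(origin));
  const methodOk = policy.allowedMethods.includes(method);
  const headersOk = wanted.every((h) => policy.allowedHeaders.includes(h));

  if (!originOk || !methodOk || !headersOk) {
    return null;
  }
  return {
    "Access-Control-Allow-Origin": anyOrigin ? "*" : origin,
    "Access-Control-Allow-Methods": policy.allowedMethods.join(", "),
    "Access-Control-Allow-Headers": policy.allowedHeaders.join(", "),
  };
}

// A preflight for the POST shown earlier would look roughly like this.
const preflight = {
  "origin": "http://client.example.org",
  "access-control-request-method": "POST",
  "access-control-request-headers": "Content-Type",
};
console.log(answerPreflight(preflight, policy));
```

If the function returns null in this model, that corresponds to the "conversation is over" case: the browser never sends the actual POST.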

Getting back to the BizTalk world for a second, if your REST Receive Location isn’t expecting this, you will see this lovely response:

Technically, you won’t see any Access-Control headers at all in the response (I took the screenshot after un-configuring some things, but apparently I missed that one). Ultimately the error comes down to a contract mismatch. More specifically it will read something like this:

The message with To ‘http://your.service.url.here.example.org/service.svc/operation’ cannot be processed at the receiver, due to an AddressFilter mismatch at the EndpointDispatcher.

This OPTIONS request actually needs to become part of your contract for each endpoint for which you wish to allow cross-domain communications. Additionally, you have to be ready to provide a happy and valid response for this message.

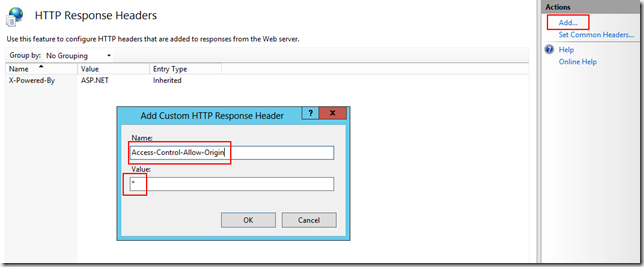

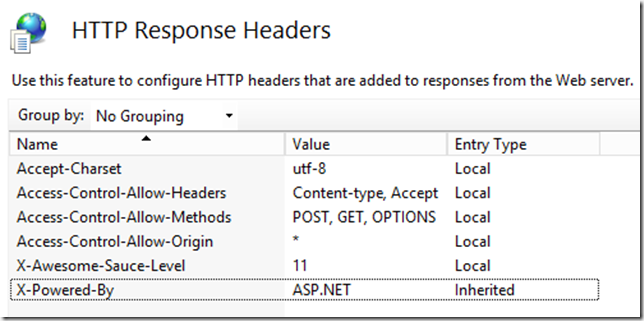

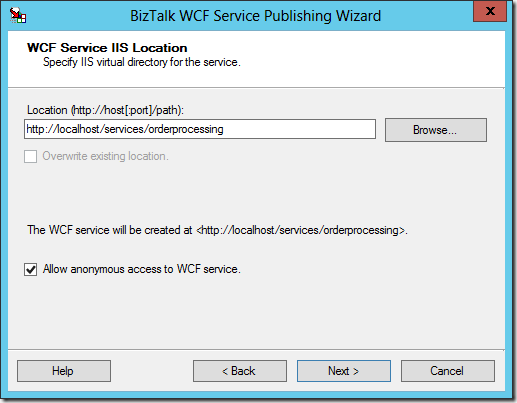

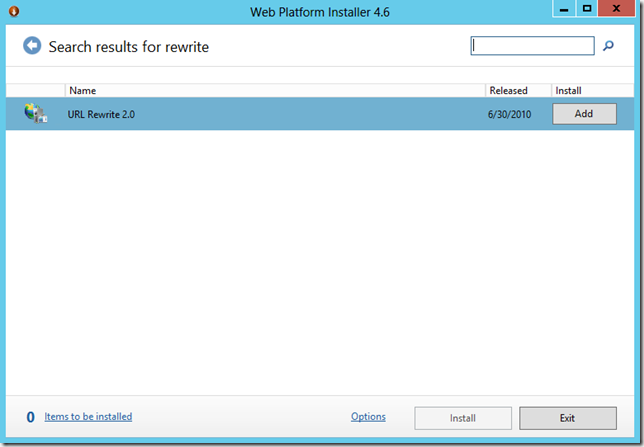

Configuring IIS to Send CORS Headers

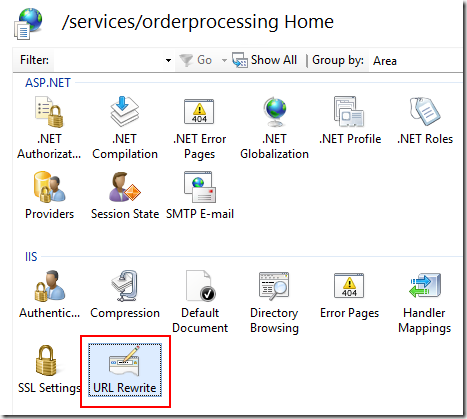

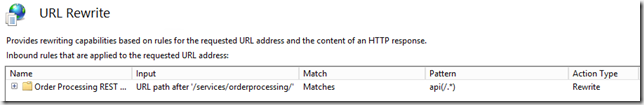

Ultimately there are a few ways we could inject the headers required to make CORS happen. I’m going to go with having IIS inject those headers at the server level (rather than doing it at the adapter configuration level). I am going to do this at the level of the virtual directory created by the WCF Service publishing wizard when I published my REST endpoint. Examining the Features View for that virtual directory we should find HTTP Response Headers under the IIS group:

From there, I can begin adding the headers for those things that I would like to allow:

Above, I’m setting a header which indicates that I wish to allow requests from any origin domain. After some mucking around with Fiddler to find out what my little test page would actually be requesting using IE, I ended up with this set of headers that seemed to make the world happy:

Pesky Empty Messages

Even with the correct headers being sent back in the response, the response code will still be 500 until we can properly deal with the incoming empty OPTIONS message, and ensure that we are routing a response back such that a 200 response code is returned.

In BizTalk Server the easiest way to get a message from the receive pipeline to route back to the send pipeline is through the RouteDirectToTP property. This is the same property that ensures that EDI Acknowledgements are routed directly back to the trading partner on the send pipeline of the receive port, and the same property used by the ESB Toolkit to create the Forwarder pipeline component (short circuiting a default instance subscription for messaging-only request/response service requests, and restoring it after multiple requests have been made).

So how are we going to use it here? Well, we’re going to detect if we’re dealing with a CORS message in the pipeline. If we are, then we are going to promote that property with a value of “true” to indicate that we want that empty message to flow right back to the response pipeline as the empty body of our lovely 200 OK response. The code for that looks a little something like this (use your imagination or GitHub for the values of those constants):

[sourcecode language="csharp"]
#region Handle CORS Requests

// Detect if the incoming message is an HTTP CORS request
// http://www.w3.org/TR/cors/

// If it is, we will promote both the RouteDirectToTP property and the
// EpmRRCorrelationToken so that the request is immediately routed
// back to the send pipeline of this receive port

object httpMethod = null;
httpMethod = inmsg.Context.Read(HTTP_METHOD_PROPNAME, WCF_PROPERTIES_NS);

if (httpMethod != null && (httpMethod as string) == OPTIONS_METHOD)
{
    object curCorrToken = inmsg.Context.Read(EPM_RR_CORRELATION_TOKEN_PROPNAME, SYSTEM_PROPERTIES_NS);
    inmsg.Context.Promote(EPM_RR_CORRELATION_TOKEN_PROPNAME, SYSTEM_PROPERTIES_NS, curCorrToken);
    inmsg.Context.Promote(ROUTE_DIRECT_TO_TP_PROPNAME, SYSTEM_PROPERTIES_NS, true);

    var corsDoc = new XmlDocument();
    corsDoc.AppendChild(corsDoc.CreateElement(DEFAULT_PREFIX, CORS_MSG_ROOT, JSON_SCHEMAS_NS));
    writeMessage(inmsg, corsDoc);

    return inmsg;
}

#endregion
[/sourcecode]

The reason that we are bothering to create an XML document out of this empty message is that this decode component will be followed by an XML Disassemble component that will need to recognize the data. By the end of this post we will have two special case XML Documents for which we will create schemas. In the case of this CORS message, we will need to ensure that the JsonEncoder returns this XML as an empty response body. The following code takes care of this other side of the equation:

[sourcecode language="csharp"]
#region Handle CORS Requests

// Detect if the incoming message is an HTTP CORS request
// http://www.w3.org/TR/cors/

object httpMethod = null;
httpMethod = inmsg.Context.Read(HTTP_METHOD_PROPNAME, WCF_PROPERTIES_NS);

if (httpMethod != null && (httpMethod as string) == OPTIONS_METHOD)
{
    // Remove the message body before returning
    var emptyOutputStream = new VirtualStream();
    inmsg.BodyPart.Data = emptyOutputStream;

    return inmsg;
}

#endregion
[/sourcecode]

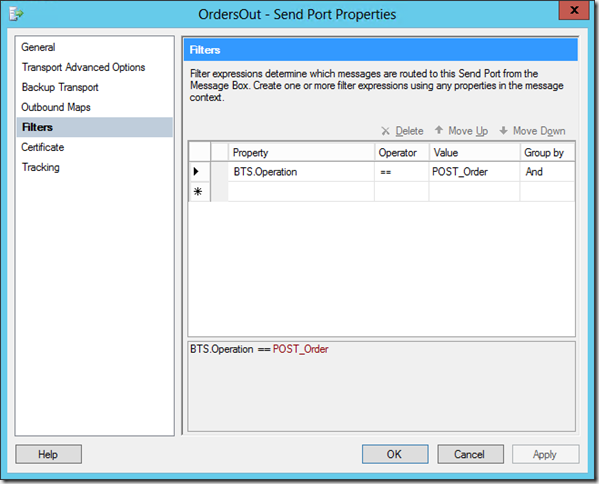

At this point we should be able to receive the OPTIONS message through the receive pipeline, and have it properly route back through the response pipeline. Beyond that, we just need to make sure that we pair our actual resources, within the operation mapping of the adapter config, with mappings for the CORS request:

<Operation Name="CrossDomainCheck" Method="OPTIONS" Url="/ItemAverageRequest" />
<Operation Name="ItemAverageRequest" Method="POST" Url="/ItemAverageRequest" />

Examining JSONP

Well that was one way that we could allow for cross-domain requests – requests that allowed for the POSTing of lots of wonderful data. But what if we just want to get some data, we don’t want to waste HTTP requests, and we’re not using a browser that supports CORS (or maybe we just want to live dangerously)? There is an alternative – JSONP.

Essentially this is a cheap hack that got its own acronym to legitimize how terrible of an idea it actually is.

I think the best way to explain this one is to examine a sample code snippet, and then the conversation that we want to get out of it:

[sourcecode language="javascript"]
var request = $.getJSON($("#serverUrlJsonp").val() + "?callback=?");
[/sourcecode]

Notice the ?callback=? portion of that snippet. It’s appending a querystring parameter named callback to the URL with a value to be filled in by jQuery. So what will it fill in? A randomly generated function for receiving data back from the server. But how is the server going to execute local code within the browser you might ask? Let’s take a look:

jQuery seems to populate the callback querystring parameter with a suitably random value. Check out what happens in the response. The response is a function call to that randomly generated callback function. The way JSONP is cheating the system to get a cross-domain request working is by injecting a script node into the DOM with the source set to the service (passing the callback name in the querystring). Then as the service responds, the response is executed as JavaScript code. This is definitely one where you’re putting a lot of trust in the service.
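The mechanics described above can be sketched in a few lines of plain JavaScript. This is a simulation, not what jQuery actually does internally: `buildJsonpUrl` and `wrapJsonp` are hypothetical helper names, the callback name and service URL are made up, and `new Function` stands in for the browser executing the contents of the injected script node.

```javascript
// Client side: build the URL that the injected <script> node would point at.
function buildJsonpUrl(serviceUrl, callbackName) {
  const separator = serviceUrl.includes("?") ? "&" : "?";
  return serviceUrl + separator + "callback=" + encodeURIComponent(callbackName);
}

// Service side: wrap the JSON payload in a call to the named callback.
function wrapJsonp(callbackName, jsonString) {
  return callbackName + "(" + jsonString + ");";
}

// Hypothetical callback name; jQuery generates its own random one.
const callbackName = "jsonpCallback12345";
const received = [];
const callback = (data) => received.push(data);

const url = buildJsonpUrl("http://service.example.org/ItemsList", callbackName);
const responseBody = wrapJsonp(callbackName, '{"item":[{"id":1,"amount":10}]}');

// Simulate the browser running the response as script. The function's single
// parameter is named after the callback, so the call inside the body resolves.
new Function(callbackName, responseBody)(callback);

console.log(url);
console.log(responseBody);
console.log(received[0].item[0].amount);
```

Seeing the response body as an executable statement also makes the trust problem obvious: whatever the service puts in that response runs in the page.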

So how do we make it work with BizTalk?

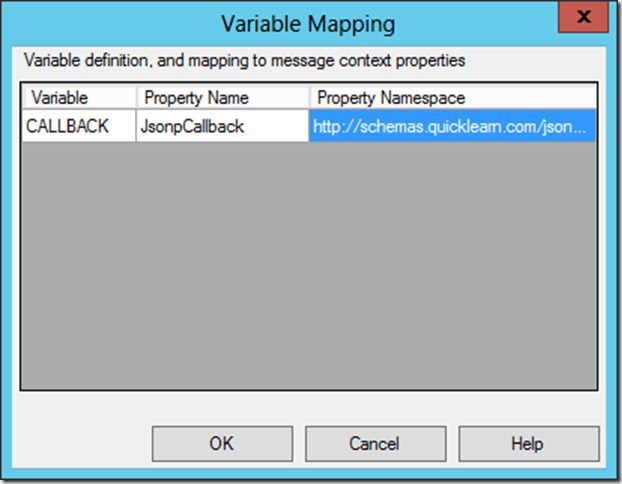

Capturing the JSONP Callback Name on Receive

JSONP’s ability to work depends on the ability of the service to craft a call to the callback method named by the callback querystring parameter. The easiest way to capture this is through an operation mapping combined with variable mapping:

<Operation Name="GetItemListJsonp" Method="GET" Url="/ItemsList?callback={callback}" />

Essentially what we’re doing here is relying on the adapter to promote the callback function’s name to the context of the message, so that it will be available as we generate the response JSON.
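For anyone who has not used URL templates with variables before, the following JavaScript sketch shows the general idea of matching a method and URL against the operation mappings from this post and pulling out the `{callback}` value. It is only an illustration: `matchOperation` is a made-up function, it handles literal paths and query-string variables only, and it makes no claim about how the adapter performs its matching internally (for example, it simply leaves a variable unset when the query parameter is missing).

```javascript
// Operation mappings taken from the examples in this post.
const operations = [
  { name: "CrossDomainCheck", method: "OPTIONS", url: "/ItemAverageRequest" },
  { name: "ItemAverageRequest", method: "POST", url: "/ItemAverageRequest" },
  { name: "GetItemListJsonp", method: "GET", url: "/ItemsList?callback={callback}" },
];

// Return the matching operation name plus any template variables found in
// the query string, or null when no mapping fits the request.
function matchOperation(method, requestUrl) {
  const [path, query = ""] = requestUrl.split("?");
  const params = new URLSearchParams(query);

  for (const op of operations) {
    const [opPath, opQuery = ""] = op.url.split("?");
    if (op.method !== method || opPath !== path) {
      continue;
    }
    const variables = {};
    for (const [key, placeholder] of new URLSearchParams(opQuery)) {
      // A placeholder looks like {name}; copy the request value under that name.
      const m = /^\{(\w+)\}$/.exec(placeholder);
      if (m && params.has(key)) {
        variables[m[1]] = params.get(key);
      }
    }
    return { operation: op.name, variables };
  }
  return null;
}

console.log(matchOperation("GET", "/ItemsList?callback=cb123"));
console.log(matchOperation("OPTIONS", "/ItemAverageRequest"));
```

In the pipeline components, the equivalent of `variables.callback` is what gets read back out of the message context when the response is generated.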

Pipeline Component Changes to Deal with JSONP

In order to deal properly with JSONP, we will have to make some minor modifications to both the JsonDecoder and the JsonEncoder pipeline components. Starting with the JsonDecoder component, which will be executing upon initial receipt of our request message, we will add the following code:

[sourcecode language="csharp"]
#region Handle JSONP Request

// Here we are detecting if there has been any value promoted to the jsonp callback property
// which will contain the name of the function that should be passed the JSON data returned
// by the service.

object jsonpCallback = inmsg.Context.Read(JSONP_CALLBACK_PROPNAME, JSON_SCHEMAS_NS);
string jsonpCallbackName = (jsonpCallback ?? (object)string.Empty) as string;

if (!string.IsNullOrWhiteSpace(jsonpCallbackName))
{
    var jsonpDoc = new XmlDocument();
    jsonpDoc.AppendChild(jsonpDoc.CreateElement(DEFAULT_PREFIX, JSONP_MSG_ROOT, JSON_SCHEMAS_NS));
    writeMessage(inmsg, jsonpDoc);

    return inmsg;
}

#endregion
[/sourcecode]

This code is examining the callback context property to determine if there is any value promoted. If there is, it is assumed that this is a JSONP request. It also assumes that all JSONP requests will have an empty body, and as such will require a placeholder node in order to make it through the following XmlDisassembler.

On the response side, these are the modifications made to the JsonEncoder component:

[sourcecode language="csharp"]
#region Handle JSONP Request

// Here we are detecting if there has been any value promoted to the jsonp callback property
// which will contain the name of the function that should be passed the JSON data returned
// by the service.

object jsonpCallback = inmsg.Context.Read(JSONP_CALLBACK_PROPNAME, JSON_SCHEMAS_NS);
string jsonpCallbackName = (jsonpCallback ?? (object)string.Empty) as string;

if (!string.IsNullOrWhiteSpace(jsonpCallbackName))
    jsonString = string.Format("{0}({1});", jsonpCallbackName, jsonString);

#endregion
[/sourcecode]

Here we’re jumping in at the last minute, before the JSON formatted data is returned, and wrapping the whole thing in a function call – if the callback function name exists in the context.

Building a Simple Proof of Concept Service

After burning an evening on these components, I decided it would only be worthwhile if I could actually use them to do something interesting.

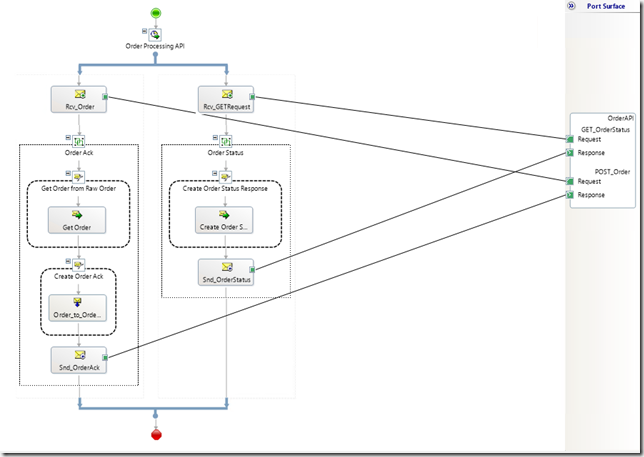

The requirement I set for myself was that I had to have a service that could be called cross-domain from a simple .html page with only client-side JavaScript goodness. As a result I resurrected my dual listener orchestration anti-pattern from one of my previous posts (a hijacked convoy pattern?) to create the following monstrosity:

So what’s going on here, and what does this service allow me to do? Well, it exposes two resources, (1) ItemsList and (2) ItemAverageRequest. They both deal in “items”. There really is no meaning for what an item is; I just needed something to test with, and item was a nice fit.

The first resource, ItemsList is something that you can GET. As a result it will return a nice JSON formatted list of “items” each having an “amount” of some kind and a unique “id”.

The ItemAverageRequest resource is a place where you can POST a new ItemAverageRequest (really this is just a list of items exactly as returned by the first resource), and as a result you will receive the cumulative average “amount” for the items in the list.

The maps in the orchestration shown above ensure that these resources have the described behavior.
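In the actual service this calculation happens inside the maps, but the arithmetic behind ItemAverageRequest is simple enough to show as a short JavaScript sketch. The `averageAmount` function name, the sample values, and the choice to return 0 for an empty list are my assumptions here; the post only establishes that each item has an “id” and an “amount”.

```javascript
// Hypothetical helper: average the "amount" of every item in the list.
// Returning 0 for an empty list is an assumption made for this sketch.
function averageAmount(items) {
  if (items.length === 0) {
    return 0;
  }
  const total = items.reduce((sum, item) => sum + Number(item.amount), 0);
  return total / items.length;
}

// Sample data shaped like the list returned by the ItemsList resource.
const sampleItems = [
  { id: "a", amount: 10 },
  { id: "b", amount: 20 },
  { id: "c", amount: 60 },
];

console.log(averageAmount(sampleItems)); // 30
```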

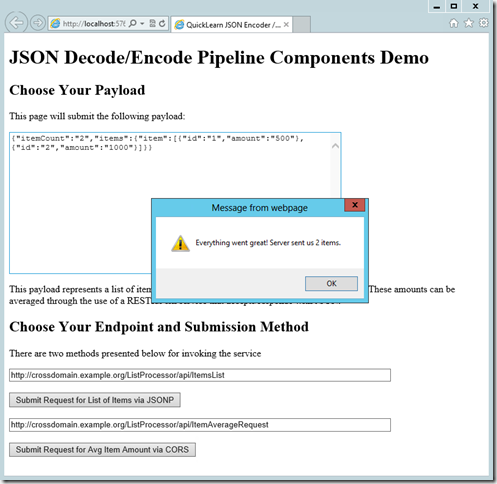

Testing the Service

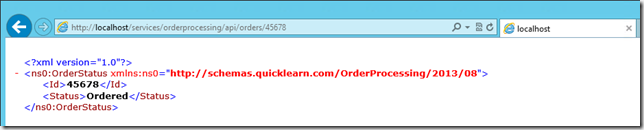

So did all of this work pay off, and give us happy results? I built a simple test client to find out. Here’s what the JSONP request looks like against the ItemsList resource (the textarea in the background contains the raw response from the service):

The raw HTTP conversation for this request was actually shown above in the Fiddler screenshot from the JSONP discussion.

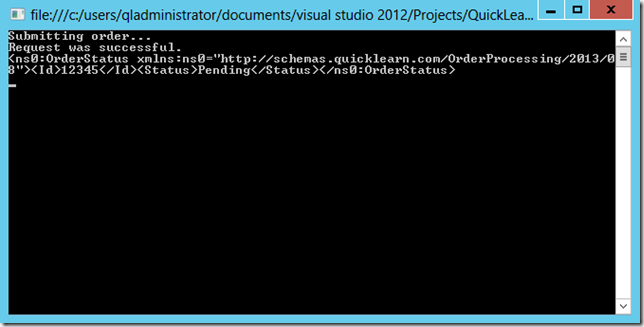

Leaving that data in the textarea as input, and then invoking the POST against the ItemAverageRequest resource yields the following:

The HTTP conversation for this request happened in two parts, represented below:

Summary

I really hope I was able to add something to the conversation around using JSON formatted data with the WCF-WebHttp adapter in BizTalk Server 2013. There’s certainly more to it than just getting the happy XML published to the MessageBox.

Was a pipeline component the right choice to handle protocol level insanity like CORS? Probably not, that part of it is something that we should have ultimately implemented by extending WCF and having the adapter deal with it — especially since CORS really doesn’t have anything to do with JSON directly. I’ll leave that to the commenters and other BizTalk bloggers out there to consider.

JSONP, however, ultimately comes down to an output encoding issue, and since we were already dealing with one cross-domain communication issue in the pipeline, it was natural to handle CORS in the same fashion.

Before I sign off for the remainder of the week, I want to take this time to remind you that if you would like to take your BizTalk skills to the next level with BizTalk Server 2013, you should check out one of our upcoming BizTalk Server 2013 Deep Dive Classes, where you will learn all about fun stuff like custom pipeline component development and have an opportunity to get hands on with some of the new adapters!

If you would like to access sample code for this blog post, you can find it on GitHub.